The Role of API Testing in the Digital World

by Eggplant, on 11/10/17

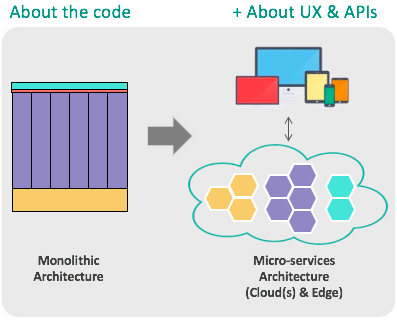

Consumerization, digital experience, DevOps, mobile, fragmentation, and microservices have changed how software products are architected, how they’re produced, what they do, who uses them, and those users’ expectations. As a result, there’s been a massive shift in testing requirements, both in terms of what we’re trying to achieve and what we need to do.

Case in point: microservices architectures.

In the old days, we built software from the ground up, and the in-house development team wrote the whole app. In that environment, the team spent 90 percent of its time creating generic middleware (communication layers, data layers, user management, and so on), so generic middleware classes were what we tested.

Now, we create digital services by composing existing microservices via APIs, and those services only come together at the point where the user interacts with them. From the tester’s perspective, we need to ensure that those services behave as expected via the API, and that together they deliver the right experience as the user actually sees it.

The UX Gap

In a recent survey* we commissioned, 86 percent of respondents said they were meeting their test objectives but only 18 percent felt they were actually meeting customer objectives.

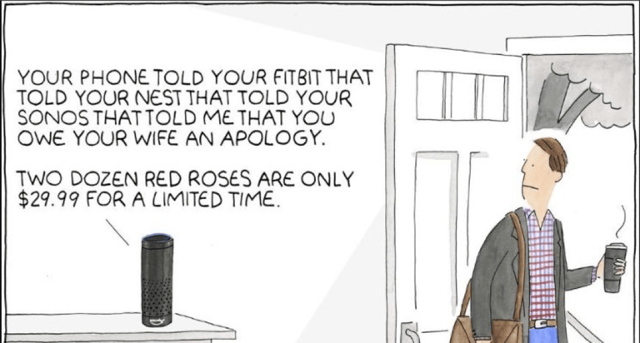

This cartoon illustrates the point that the user experiences we’re delivering now are composed of lots of different elements—some that we make but many that we don’t. And our current approaches to testing are really all about testing the code we create, not the user experience. So, it’s not surprising that we’re not delivering great user experiences.

Testing today isn’t about UI testing or API testing; it’s about doing both together. It’s about ensuring that an API call to a microservice has the right effect on the user experience. Put another way, it’s about performing some user action and ensuring that the result is instantly visible via the API, so you have a consistent view.
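To make the idea concrete, here is a minimal sketch of a test that checks both directions: an API call showing up on screen, and a user action showing up in the API. Everything in it is hypothetical and for illustration only. `CartService` is an in-memory stand-in for a microservice, `CartPage` is a stand-in for the screen a user would see, and neither represents a real product API. In practice the API side would be an HTTP call and the UI side would be driven by a UI automation tool.

```python
from dataclasses import dataclass, field


@dataclass
class CartService:
    """Hypothetical in-memory stand-in for a microservice behind an API."""
    items: dict = field(default_factory=dict)

    def api_add_item(self, sku: str, qty: int) -> dict:
        # Simulates a "POST /cart/items" style call.
        self.items[sku] = self.items.get(sku, 0) + qty
        return {"sku": sku, "qty": self.items[sku]}

    def api_get_cart(self) -> dict:
        # Simulates a "GET /cart" style call; returns a copy of the state.
        return dict(self.items)


@dataclass
class CartPage:
    """Hypothetical stand-in for the screen the user actually sees."""
    service: CartService

    def click_add(self, sku: str) -> None:
        # Simulates the user pressing an "Add to cart" button.
        self.service.api_add_item(sku, 1)

    def visible_text(self) -> str:
        # Simulates reading the text rendered on screen.
        cart = self.service.api_get_cart()
        lines = [f"{sku} x{qty}" for sku, qty in sorted(cart.items())]
        return ", ".join(lines) or "Cart is empty"


def check_api_call_reaches_ui(page: CartPage, service: CartService) -> bool:
    """API to UI: a call to the service should change what the user sees."""
    service.api_add_item("SKU-1", 2)
    return "SKU-1 x2" in page.visible_text()


def check_user_action_reaches_api(page: CartPage, service: CartService) -> bool:
    """UI to API: a user action should be visible through the API."""
    before = service.api_get_cart().get("SKU-2", 0)
    page.click_add("SKU-2")
    return service.api_get_cart().get("SKU-2", 0) == before + 1
```

The point of the sketch is the shape of the test rather than the stand-ins: each check drives one side and asserts on the other, so a passing result says something about the whole path and not just about one layer.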

UX and Orion

The Orion spacecraft that’s being built by Lockheed Martin for NASA is a great example of why testing needs to be more user-centric and why we need to adapt testing methods to modern architecture.

Orion will carry a crew of four astronauts on deep space missions to explore asteroids and Mars, and it’s the first spacecraft to include an entirely digital cockpit display (called a glass cockpit) to monitor spaceflight and give instructions to the crew. A graphically rich system designed to provide better spacecraft monitoring and improve communication between the spacecraft and the control center, the advanced display also significantly reduces the weight of the cockpit. The monitoring display will take in information from myriad sources—including hundreds of sensors, different in-ship systems, and ground control—and aggregate, process, and present the data.

This critical, highly complex display system is built using a microservices-type architecture where the only point of confidence is the user interface. When you’re drifting in deep space between Earth and Mars, you really want to know that the information you’re seeing on the screen is accurate. It isn’t enough to know that the network message from the sensor to the cockpit was formatted correctly if the data was then corrupted before being displayed on the screen.
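The difference between a well-formed message and a correct display can be sketched in a few lines. This is an illustration under stated assumptions, not a description of how Orion’s systems work: the JSON message shape, the `sensor` and `value` field names, the `"101.3 kPa"` display format, and the tolerance are all invented for the example.

```python
import json


def message_is_well_formed(raw: str) -> bool:
    """Message-level check: is the payload valid and shaped as expected?

    The {"sensor": ..., "value": ...} shape is a hypothetical format.
    """
    try:
        msg = json.loads(raw)
    except json.JSONDecodeError:
        return False
    return (
        isinstance(msg, dict)
        and isinstance(msg.get("sensor"), str)
        and isinstance(msg.get("value"), (int, float))
    )


def display_matches_message(raw: str, displayed_text: str,
                            tolerance: float = 0.05) -> bool:
    """User-level check: does the value on screen match the value sent?

    Assumes the screen text starts with the number, e.g. "101.3 kPa".
    """
    sent = json.loads(raw)["value"]
    try:
        shown = float(displayed_text.split()[0])
    except (ValueError, IndexError):
        # Nothing readable on screen counts as a mismatch.
        return False
    return abs(shown - sent) <= tolerance
```

A message can pass the first check and still fail the second, for example if a rendering step shifts a decimal point. That second check is the one that reflects what the crew would actually read.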

In the old days, this would have to be tested manually. But with our user-centric approach, which tests through the eyes of the user, Lockheed Martin can use automated testing to test the display, shrinking delivery time by months and gaining a lot more flexibility.

These kinds of potential results are what make this so exciting, and they’re why we’ve added API testing to our Digital Automation Intelligence Suite. Our latest release isn’t about fundamental changes to API testing. It’s about the ability to seamlessly combine UI and API testing to do proper end-to-end testing and really test modern apps, which compose microservices via APIs into digital experiences.

It’s also very exciting that our software gets to play a role in testing elements of a spacecraft that will take human astronauts farther than they’ve ever gone before.

*Kickstand survey of 750 testers in the U.S. and the U.K. conducted on behalf of Testplant, Aug 2017.